Authors: Marta Bobyk and Solomiya Kubinska

The new era of Conversational AI and Automation is here – are you with…

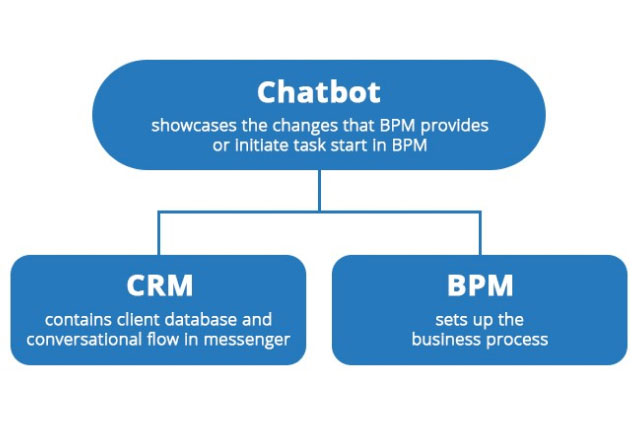

Almost any 21st-century project requires flexibility and scalability from an architectural point of view. Especially when it comes to chatbot…

This step-by-step guide is for business owners and project managers who want to get a verified Twilio number. Mostly,…

Article 1: The key role of charts in our stock-analysis-oriented project should definitely be highlighted as they are the…

In this publication series, we’re going to cover the best practices we use when developing IT projects. If you’ve ever had…

WhatsApp is on everybody’s A-list. It’s nothing new that WhatsApp is all the hype, and most companies want to implement…

Have you ever met really stupid chatbots that don’t understand what you want and only pretend to have intelligence? I…

HubSpot recently published a course on Conversational UI that is a good place to learn how the top marketing players are thinking…

Operations Management in Which People Use Various Methods to Discover, Model, Analyze, Measure, Improve, Optimize, and Automate Business Processes. Business…